Issue 23: Intel’s Pohoiki Beach and Disruption Theory

Why this looks like a case of disruption playing out in front of us

Back to some news and analysis before the next piece of practical guidance. As ever, my goal is to introduce you to some of these notions and explain in simple terms how you can think about them going forward in your own Digital Transformation journey. I’m always available for consultation and would be happy to help out. Email me at info@dgtlfutures.com.

If this email was forwarded to you, I’d love to have you on board. You can sign up here:

On to the issue.

Intel, Disruption Theory and why it doesn’t bode well for them

Lately I’ve been reading a lot about Disruption Theory and trying to make sense of it in a modern digital context. So when I read that Intel had announced a specialised processor aimed at the research community, I thought this might be a clear example of the theory playing out in front of our eyes.

Today, Intel announced that an 8 million-neuron neuromorphic system comprising 64 Loihi research chips — codenamed Pohoiki Beach — is now available to the broader research community. With Pohoiki Beach, researchers can experiment with Intel’s brain-inspired research chip, Loihi, which applies the principles found in biological brains to computer architectures. Loihi enables users to process information up to 1,000 times faster and 10,000 times more efficiently than CPUs for specialized applications like sparse coding, graph search and constraint-satisfaction problems.

The problem with theories like this one is much the same as the problem we have when we discuss human or animal evolution. We find it hard to see the direction of the evolutionary process in real time, mostly because it happens so slowly and over many generations. In retrospect we can clearly see what happened, and we can often make an informed guess as to why it happened. Reliable prediction, it seems, is just out of our reach. With modern digital technologies and the pace at which they evolve, though, we might just be able to see far enough into the future to predict outcomes for companies in this new world.

Disruption Theory

First, it is important to explain what Disruption Theory is and where it came from. The ideas were first documented and argued in Clayton Christensen’s early works, one of which (co-written with Joseph L. Bower) gained particular traction in the academic world: Disruptive Technologies: Catching the Wave.

This essay, published in the Harvard Business Review, was born out of the observation that businesses have a difficult time staying at the top once they’re there:

One of the most consistent patterns in business is the failure of leading companies to stay at the top of their industries when technologies or markets change. Goodyear and Firestone entered the radial-tire market quite late. Xerox let Canon create the small-copier market. Bucyrus-Erie allowed Caterpillar and Deere to take over the mechanical excavator market. Sears gave way to Wal-Mart.

Later, in the book “The Innovator’s Solution”, Christensen outlines that businesses today are subject to pressures that force growth upon them. Put bluntly: grow or die. Growth can come in different guises. It can of course be profits or turnover, but increasingly in the digital age other metrics, like MAUs (monthly active users) or engagement, drive today’s start-ups to explore ways to generate, sustain and increase that growth, at their peril if they don’t. We’ve seen stock values tank when companies fail to reach the growth figures imposed upon them by Wall Street and the like.

The equity markets brutally punished those companies that allowed their growth to stall. Twenty-eight percent of them lost more than 75 percent of their market capitalization. Forty-one percent of the companies saw their market value drop by between 50 and 75 percent when they stalled, and 26 percent of the firms lost between 25 and 50 percent of their value. The remaining 5 percent lost less than 25 percent of their market capitalization.

Disruption Theory (DT) was developed to help understand this phenomenon, make sense of these pressures, and model how new businesses and business models tend to appear in markets, ravaging the incumbents.

The Innovator’s Dilemma goes into much more detail than I can fit in this newsletter, and I thoroughly recommend you read it. Distilled to its essentials, DT tells us how, under certain circumstances, a company puts most of its effort into maximising profits, aligning resources and innovation to deliver better returns and satisfy the markets. That, in turn, causes otherwise well-run operations to start to fail and eventually get killed in a deeply cut-throat world.

The (relatively) new entrants

Intel is a well-run, long-established microprocessor design and fabrication company, with a phenomenal marketing arm and deep links to the most important companies in the computing industry. Founded in 1968, a year before AMD, it has gone head to head with AMD and beaten it in nearly every battle on just about every level that means anything: marketing, price, availability, design, etc.

The new entrant in the microprocessor market is known as ARM, or as it was previously known, Advanced RISC Machines, and before that Acorn RISC Machine, which gives you an idea of its origins: powering Acorn Archimedes personal computers. The company was founded in Cambridge, UK, in 1990 (22 years after Intel). The processor design was a complete rethink of classic processor design, with the clue in the company’s original name: RISC.

RISC means Reduced Instruction Set Computer. The instruction set of a processor is fundamental to how the processor behaves and, more importantly, to how it is directed to do things, which is more commonly known as programming. Modern terms such as coding mean basically the same thing. Different microprocessors can share the same instruction set, allowing programmers to write the same code for all of them, and allowing compilers (software designed to turn human-readable code into native machine language, which is virtually impossible for a human to read) to translate it into the same instructions.

Compared to ARM, Intel microprocessors are CISC, Complex Instruction Set Computers. Without going into microprocessor design, an instruction is a type of command run by the processor to achieve a desired outcome, like a multiplication, division, comparison, etc. Complex instructions can be of variable length and can take more than one processor cycle to execute (processor cycles govern how fast the microprocessor can operate), but they are more efficient in memory (RAM) usage. RISC instructions are simpler and more standardised and, critically, are generally designed to execute in a single processor cycle. They have trade-offs: they use memory (RAM) less efficiently and require compilers to do more work when translating code into machine language, i.e. potentially slower development while waiting for compilation to finish.

The memory issue was only a real constraint until fairly recently. Memory has since become effectively abundant and cheap, allowing hardware designs to incorporate huge amounts of RAM.
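To make the RISC versus CISC trade-off a little more tangible, here is a rough toy sketch in Python. It is not how any real processor works: the instruction names (`MULT_MEM`, `LOAD`, `MUL`, `STORE`) and the cycle costs are assumptions I have made up purely for illustration, and they are not real x86 or ARM figures. The idea is simply that one complex instruction can do the work of several simple ones.

```python
# Toy model only: instruction names and cycle counts are illustrative
# assumptions, not real x86 or ARM figures.

memory = {"a": 6, "b": 7, "result": 0}

# CISC style: one complex instruction reads memory, multiplies, writes back.
cisc_program = [("MULT_MEM", "result", "a", "b")]

# RISC style: several simple instructions, each doing one small step.
risc_program = [
    ("LOAD", "r1", "a"),
    ("LOAD", "r2", "b"),
    ("MUL", "r3", "r1", "r2"),
    ("STORE", "result", "r3"),
]

# Assumed cost of each instruction, in processor cycles.
CYCLES = {"MULT_MEM": 4, "LOAD": 1, "MUL": 1, "STORE": 1}


def run(program, initial_memory):
    """Run a program on a copy of memory.

    Returns (final memory, number of instructions, total cycles).
    """
    mem = dict(initial_memory)
    regs = {}
    cycles = 0
    for op, *args in program:
        if op == "MULT_MEM":
            dest, x, y = args
            mem[dest] = mem[x] * mem[y]
        elif op == "LOAD":
            reg, addr = args
            regs[reg] = mem[addr]
        elif op == "MUL":
            dest, x, y = args
            regs[dest] = regs[x] * regs[y]
        elif op == "STORE":
            addr, reg = args
            mem[addr] = regs[reg]
        else:
            raise ValueError(f"unknown instruction: {op}")
        cycles += CYCLES[op]
    return mem, len(program), cycles


cisc_result = run(cisc_program, memory)
risc_result = run(risc_program, memory)

for name, (mem, count, cycles) in [("CISC", cisc_result), ("RISC", risc_result)]:
    print(f"{name}: result={mem['result']}, instructions={count}, cycles={cycles}")
```

Both programs arrive at the same answer. Under these made-up costs they even take the same number of cycles, but the RISC version needs four instructions where the CISC version needs one, which is the memory trade-off described above: more, simpler instructions to store.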

Ignoring the up and coming

Intel has largely ignored, or more recently had difficulty responding to, the threat that ARM poses. ARM processors were smaller, more power-efficient and often faster than equivalent Intel processors designed for static, mains-powered computers like the desktop computer you may be reading this on.

But the world changed, and mobile started to take over in unit sales a number of years ago. Intel responded with underpowered, more expensive and generally all-round disappointing designs for mobile workloads, the original Intel Atom being one example.

ARM, on the other hand, had the presence of mind to license its modular designs to different microprocessor manufacturers (Samsung being the most successful and well-known), but also to interested parties looking to gain an advantage in their own hardware designs, the most famous being, of course, Apple. The iPhone is powered by an Apple-designed ARM processor, and a recent iteration, the A11, has been reported to outperform some of Intel’s desktop-class processors in benchmarks. The iPad I’m writing this on outperforms almost 95% of all laptop computers on sale in 2019! More devices are being designed to fit environmental constraints like power, size etc., and the natural choice seems to be ARM over Intel. Intel’s announcement is a late entry into the IoT (Internet of Things) wave, where applications like Autonomous Vehicles, Security and Smart Devices are becoming more prevalent.

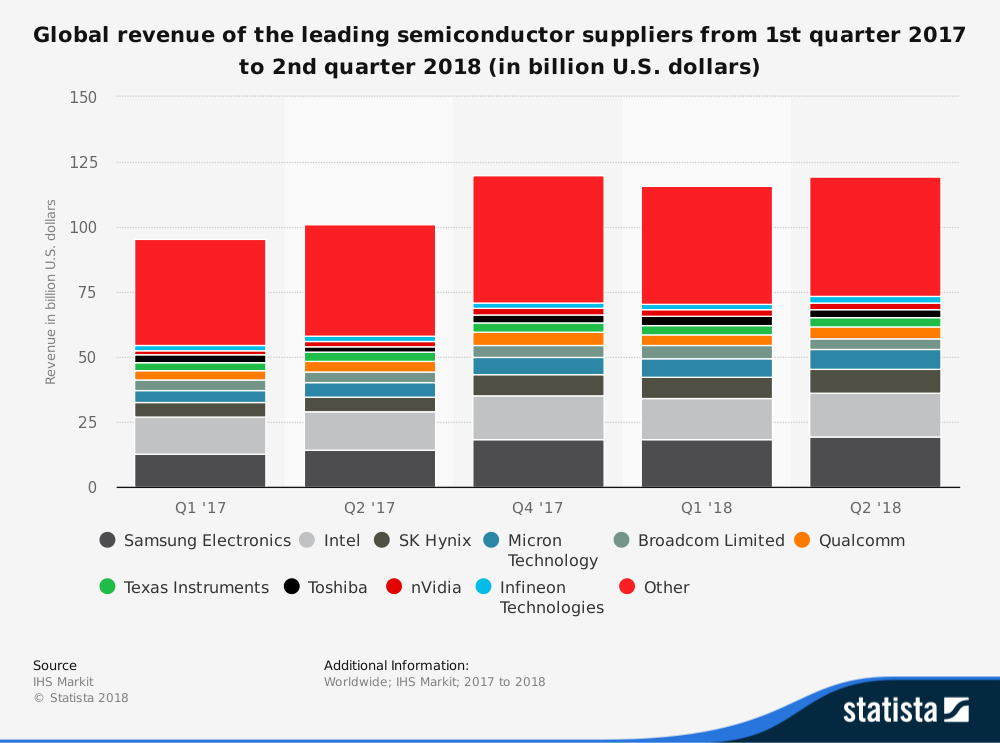

Today, ARM processor-based designs sold by Samsung, Qualcomm etc., clearly outsell Intel, as shown in this Statista chart:

Source: Statista

The response

According to DT, the oft-used response by incumbents is to go for more profit by moving their products up to a higher class. It would appear that Intel is doing just that in the face of direct competition from ARM (whose publicly announced ambition is to take 10% of the desktop/laptop market by 2022-23) and indirect competition from operating system and application developers like Microsoft, who have recently added and promoted support for ARM-designed systems for handhelds, IoT and the like.

This announcement falls neatly into that category. By providing a high-class, limited-availability design for universities and research centres, Intel probably expects to sell at a greater margin, compensating in part for the revenue lost to more downmarket sales in basic computing devices like tablets and laptops. This is a step into what Christensen describes as seeking sustaining capabilities, technologies and business models that maintain or increase profits, and it is a clear sign that the disruption is having some impact on the incumbent’s bottom line.

The risk

If we follow DT to its conclusions, it is possible to see the risk Intel poses to itself, namely being innovated out of business. I’m clearly not suggesting that Intel will fail next year, but I think its long-term future is at risk if it does not react by creating further opportunities for itself. DT shows us that disruption is inevitable and that it is better to be your own disruptor than to be disrupted by someone else. The mechanism is described in the aforementioned book, The Innovator’s Solution:

Once the disruptive product gains a foothold in new or low-end markets, the improvement cycle begins. And because the pace of technological progress outstrips customers’ abilities to use it, the previously not-good-enough technology eventually improves enough to intersect with the needs of more demanding customers. When that happens, the disruptors are on a path that will ultimately crush the incumbents. This distinction is important for innovators seeking to create new-growth businesses. Whereas the current leaders of the industry almost always triumph in battles of sustaining innovation, successful disruptions have been launched most often by entrant companies.
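The crossover Christensen describes can be sketched with a few lines of Python. Treat this as a toy model rather than anything from the book: the function name `years_until_good_enough` and every number in it (starting performance, growth rates) are hypothetical values I have picked to illustrate the shape of the argument. The entrant starts well below what demanding customers need, but improves faster than those needs grow.

```python
def years_until_good_enough(entrant_start, entrant_growth,
                            need_start, need_growth, max_years=50):
    """Return the first year in which the entrant's performance meets the
    customers' need, or None if that does not happen within max_years.

    All inputs are hypothetical: performance is in arbitrary units and the
    growth rates are yearly fractions (0.30 means 30% a year).
    """
    entrant, need = entrant_start, need_start
    for year in range(max_years + 1):
        if entrant >= need:
            return year
        # Both lines improve every year, but at different rates.
        entrant *= 1 + entrant_growth
        need *= 1 + need_growth
    return None


# Assumed figures: the entrant starts at 40% of what demanding customers
# need, improves 30% a year, while the need itself grows only 5% a year.
crossover = years_until_good_enough(40, 0.30, 100, 0.05)
print(f"Entrant becomes good enough in year {crossover}")
```

With these assumed figures the "not-good-enough" product catches up with demanding customers in year 5. If the entrant improves no faster than customer needs grow, the function returns `None`, which is the case where no disruption happens.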

Apple successfully disrupted its amazingly successful iPod business with another, even more astronomically successful business: the iPhone. In fact, the iPhone is arguably still the most successful product in the history of the world.

The types of innovation

To close out this issue, I thought I’d just introduce the types of innovation, as presented by Christensen.

Sustaining innovation basically entails making a better version of the product you’re currently producing. That can be through greater efficiency, lower costs or incremental improvements to the value proposition. In fact, sustaining innovations are often so compelling to incumbents that they ignore disruptive innovations completely.

By contrast, a disruptive innovation is one that starts with a different take on the currently available product, or creates a new category of product not previously seen, often in the form of what is called an MVP (minimum viable product). It is then iterated upon over and over until it becomes a formidable competitor to an established product, taking market share or profits from the incumbent.

You don’t have to be restricted to one or the other, and in fact smart companies like Apple use a combination of both sustaining and disruptive innovations to their advantage. By iterating on a tried and tested cash cow, Apple made the iPod so popular that it was often referred to as “the iPod company”. That was until Apple disrupted itself with the iPhone.

Reading List

Bitcoin tumbles as U.S. senators grill Facebook on crypto plans

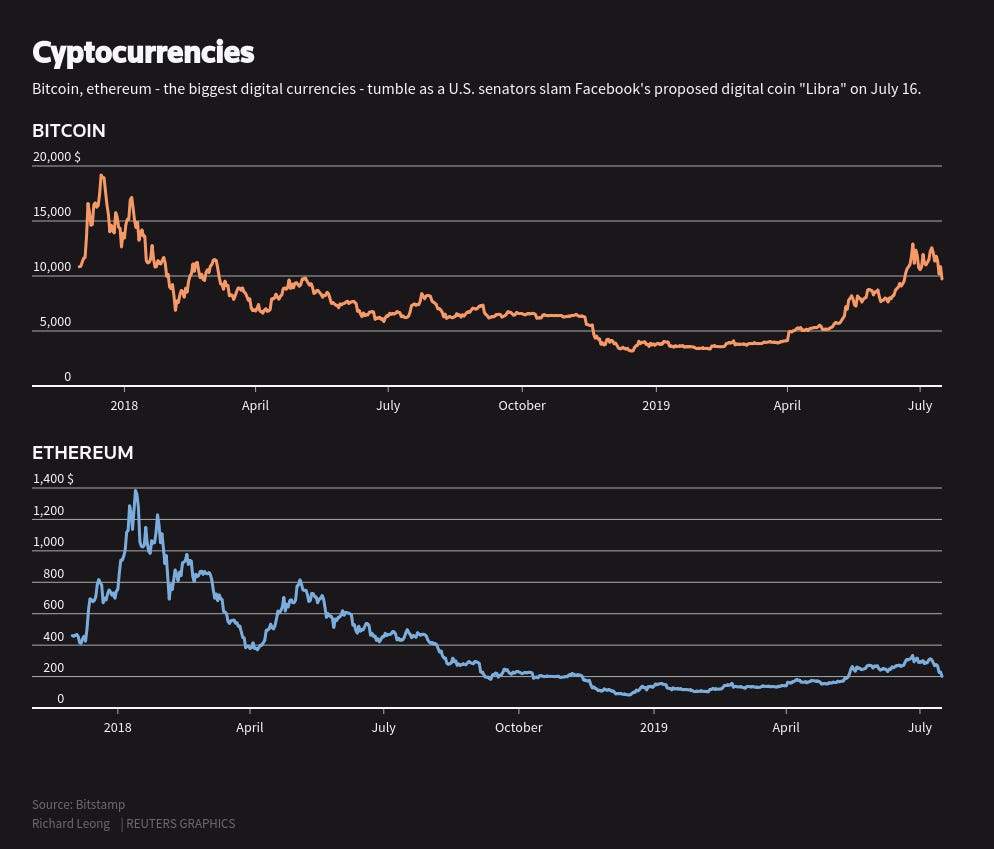

As a follow-up to Issue 19: Blockchain ≠ Cryptocurrency, the impact of Facebook’s Libra announcement has been felt far and wide.

During the hearing, a U.S. senator said Facebook was “delusional” to believe people will trust it with their money.

Bitcoin was not the only cryptocurrency affected either: Ethereum lost over 13% and others lost around 8%. Bitcoin had been gaining since April this year but has been trading at roughly a quarter of its peak value of just shy of $20,000.

Image source: Reuters

The Future is Digital Newsletter is intended for anyone interested in learning about Digital Transformation and how it affects their business. Please forward it to anyone you feel would be interested.

You can read all the back issues here:

Thanks for being a supporter, have a great day.