Issue 18: A step-by-step example of extracting value from unstructured data

Using some basic statistical analysis, you can extract value fairly simply

Bienvenue/Welcome to all the new subscribers. Thank you so much for signing up. Don’t forget you can read all the archives here. Let me know if you have any comments.

On to this Issue.

After the last few issues giving you practical advice on your data’s worth, I thought I’d take a different direction and give you a step-by-step tutorial in basic data manipulation, with the aim of extracting value from it. It’s a fairly simplistic example, but one that shows you what you can do with a little patience and the techniques I’m showing here. Bust out your calculators, it’s going to get deep 😉 Enjoy.

Gaining insights from basic, unstructured data

For both personal and business reasons, I’ve been journaling for a number of years now but have recently slowed down. I first got into it after reading numerous articles from people I respected in the business world, and out of a general interest in well-built applications that achieve their goal brilliantly. The application that got me started and really piqued my interest was an app called DayOne. It’s a lovingly designed application that really helps you get your thoughts written down. As this newsletter is not a review of the application, I won’t go into how it works or its features, but looking at this review will give a great overview.

To that end, I’d noticed that recently I’d stopped journaling, or at least hadn’t been as regular and rigorous as usual, and I wanted to know why. In the spirit of extracting value from raw unstructured data (call it Big Data if you will), I set out to analyse my journaling from an analytical point of view. As with any analysis, I had a goal in mind. First there were several questions to answer, then an analysis to see if I could “nudge” (see Side Bar - Nudge Theory) myself into better journaling or at least better regularity.

A quick note: whilst I’m fully aware that this is not specifically “business data”, it serves as an illustration of how some simple data can reveal more information than you may, at first, have thought.

Some of the questions I wanted answered were designed to help me get back on the wagon, so to speak. Let’s have a look in detail at each question.

Essentially there are two big questions that required answering:

When and why have I stopped journaling?

What could I adjust to incentivise me into more regular journaling?

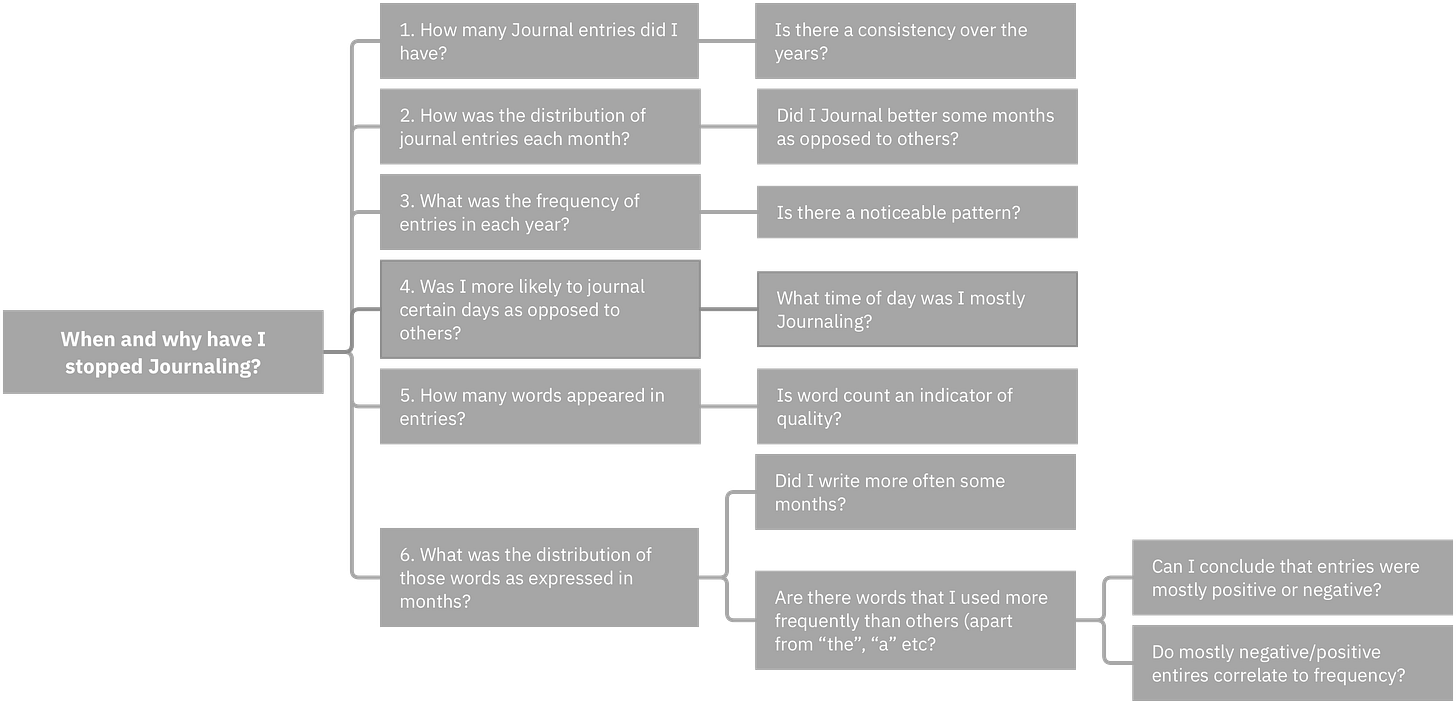

In this first part, I’m concentrating on the first question as it is the basis on which to answer the second question. To make things legible, I created a MindMap of the full question and sub-question list related to my first interrogation.

The mind-mapped query tree

Phew, that’s a lot of questions for something that on the face of it sounds very simple. In this Issue I will delve into questions 1 through 4, and dedicate another issue to questions 5 and 6, which in analytical terms require more effort, and I’d like this newsletter not to serve as a soporific! Before we dig into the details, I developed a six-step plan to get me towards answering the question tree and possibly resolving the base issue: how could I tweak things to incite me to journal better and more often?

My Six-step plan

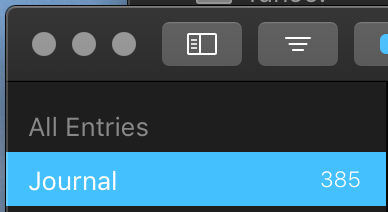

Getting Data

Getting the data is generally a simple process and you should be able to find useful data without too much trouble. My case was no different. I had a journaling app, it had entries, 385 to be precise, so all I had to do was export them. Luckily for me, DayOne features an export function and offers several export formats. As I was about to manipulate data I chose .txt (Plain Text). It would have been easier in a .csv (Comma Separated Values) format, but that didn’t stop the process. The data was in fact rather oddly structured, partly to be human-readable I guess, and it required some work to get it into a usable state. More on that later.

From a business perspective, many applications will offer an export function into various formats, but if the application you’re working with doesn’t, a quick exchange with support will likely provide the data you’re looking for.

Choosing Analysis tools

Now that I had some raw data, albeit in an unusable format for the time being, I set about seeing what data analysis tools would be best suited. Without getting into a big philosophical discussion, there were two obvious candidates: Microsoft Excel and Microsoft PowerBI. I’ve used both previously and appreciate both systems for different reasons, but in this case, I thought that the easy-to-use data manipulation tools built into PowerBI would suit my needs better. In some cases, further statistical analysis might require the use of R to dig deeper into the data.

Alternatives to PowerBI exist, here’s a list of some:

Tableau (a free to use edition called Public is available too)

Data Munging

The next step was to do what is called “Data Munging”. The Wikipedia definition is:

...the process of transforming and mapping data from one "raw" data form into another format with the intent of making it more appropriate and valuable for a variety of downstream purposes such as analytics.

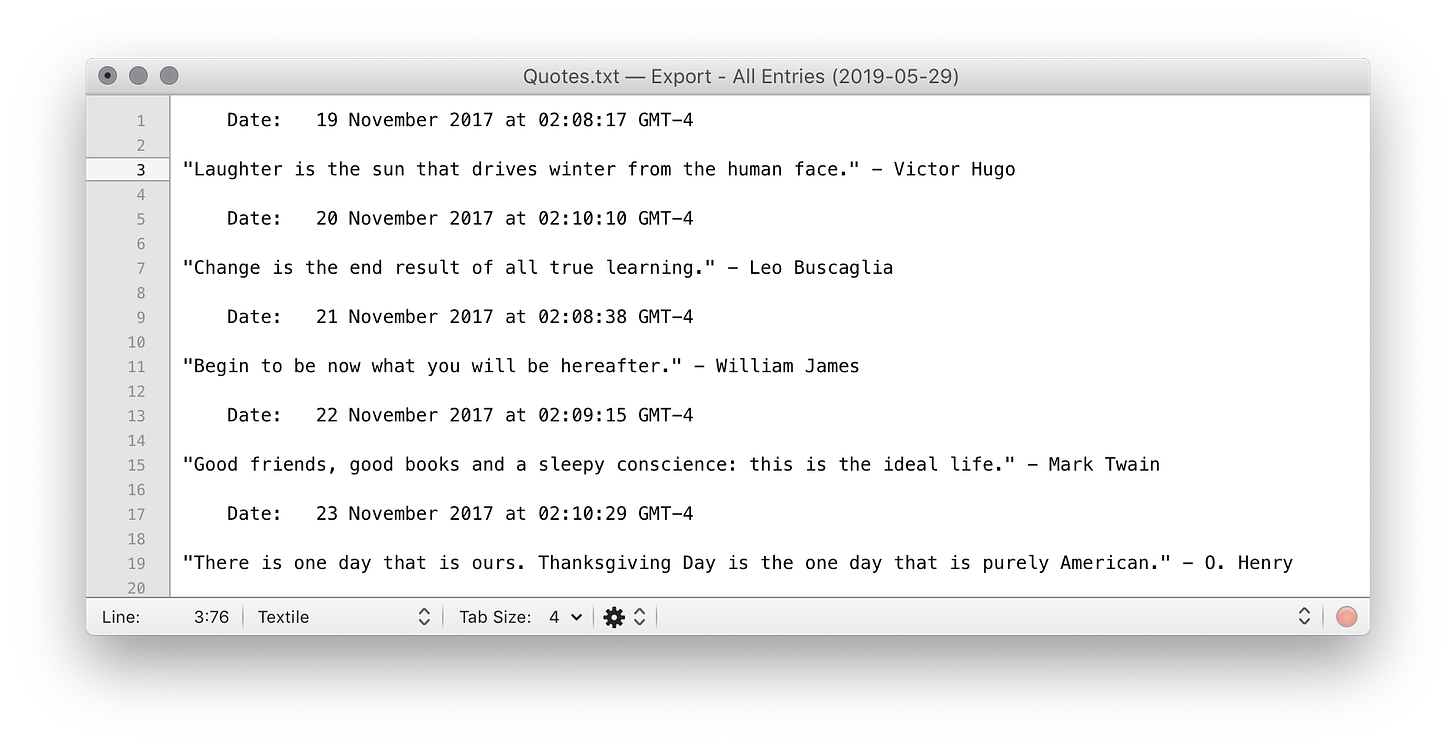

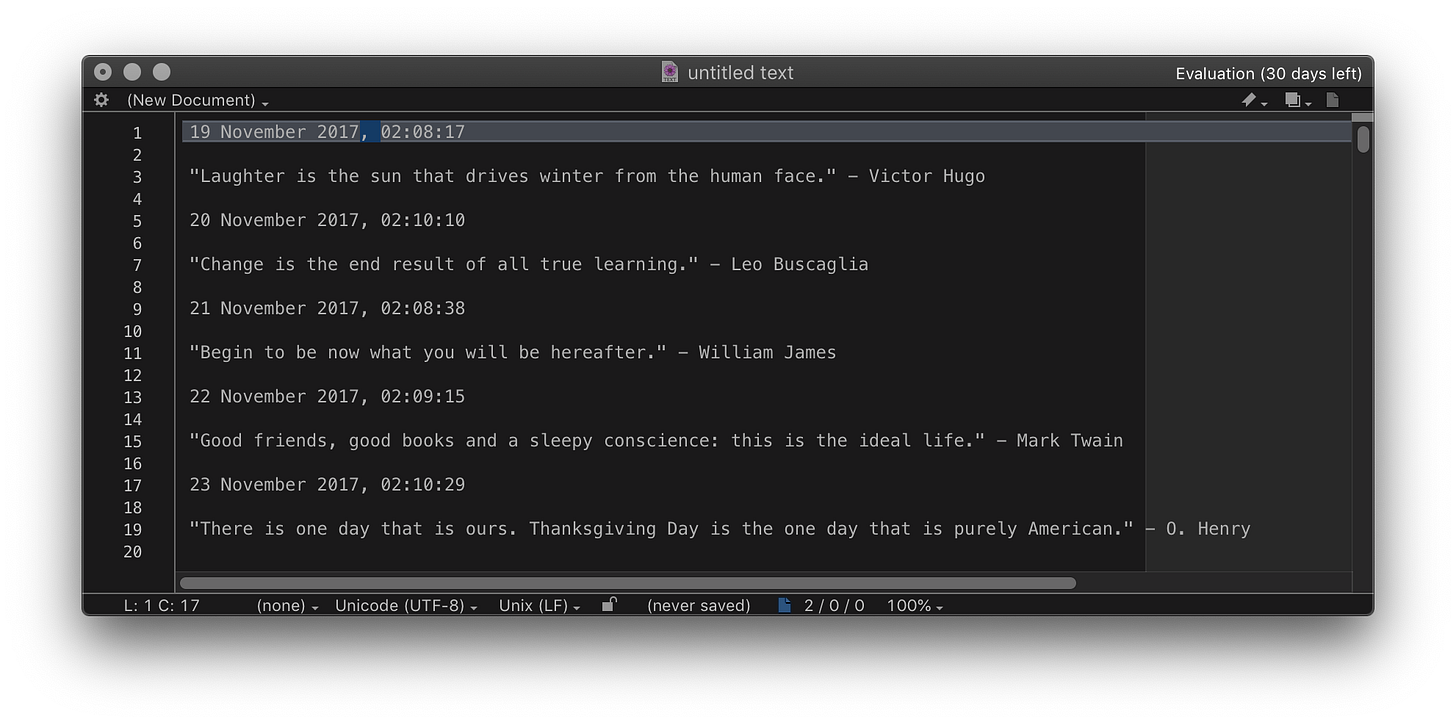

This is also referred to as Data Wrangling and the two terms are interchangeable. Whilst I was not exactly transforming the raw data into another format, I was certainly cleaning the data up and rationalising what to keep. Below is a sample export from DayOne from a journal that collects daily quotes.

The raw data exported from DayOne

Looking at the data, we can see several problems that need to be fixed in order to exploit it. For example, there are no specific delimiters (Tab, Space, Comma, etc.). The date and time are mixed on the same line with “ at “ in the middle and appended to the end is the time zone. Two lines down we see the contents of the journal entry, in this case on one line, but a larger journal entry will span multiple lines. The munging involved cleaning this up.

Using text find/replace tools, it was a simple matter of getting rid of “ Date: “ by replacing it with an empty string. The same approach removed the “ at “, and finally the GMT. I did, however, take the opportunity to insert a comma and a space after the date, creating a quasi-.csv file to import into Microsoft Excel for the next phase of the munging.

The start of the clean-up process
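For those who would rather script the find/replace step, here is a minimal Python sketch of the same clean-up. The exact shape of the header line (tab, “Date:”, the date, “ at ”, the time, then a time zone) is my assumption based on the description above, so the real export may need a different pattern. The sample lines are invented, and unlike the manual process this sketch also drops the entry text in the same pass.

```python
import re

# Assumed shape of a header line in the plain-text export, e.g.
# "\tDate:\t12 March 2018 at 06:15:32 GMT". The real export may differ.
DATE_LINE = re.compile(
    r"^\s*Date:\s*(?P<date>.+?) at (?P<time>\S+)(?: (?P<tz>[A-Z]{2,5}))?\s*$"
)

def munge(lines):
    """Yield 'date, time' for each header line and skip everything else."""
    for line in lines:
        match = DATE_LINE.match(line)
        if match:
            # Drop the "Date:" label, the " at " and the time zone,
            # and put a comma + space between date and time.
            yield f"{match['date']}, {match['time']}"

# Invented sample lines, for illustration only.
sample = [
    "\tDate:\t12 March 2018 at 06:15:32 GMT",
    "",
    "A made-up journal entry that spans",
    "more than one line.",
    "",
    "\tDate:\t13 March 2018 at 05:48:10 GMT",
]

cleaned = list(munge(sample))
print("\n".join(cleaned))
```

Writing `cleaned` out to a file gives the same two-column, comma-separated result that the manual clean-up was aiming for.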

Importing and Rationalising Data

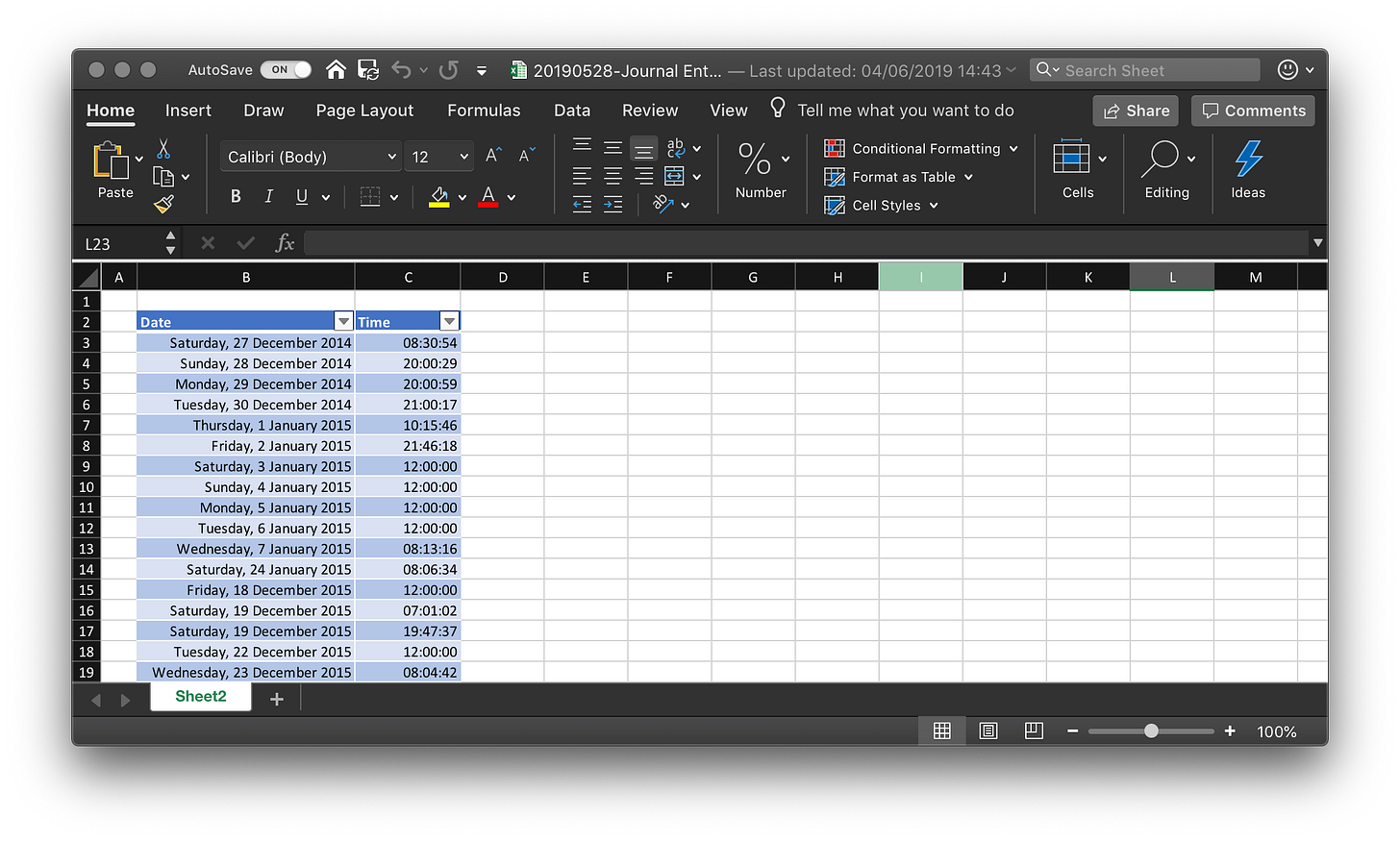

Importing this into Excel gives us a formatted file with the date and time separated into columns, with the text data and extra lines occupying the rows in between these dates and times. For the purposes of this exercise I chose to remove all the text data and leave the two columns. The end result, formatted as a table and sorted by date as presented in Excel:

The cleaned-up and structured data, ready for use in PowerBI

Now with this data cleaned up, it was time to start extracting value from the data in the chosen analysis tool, Microsoft PowerBI in this case. PowerBI is a simple and very powerful tool to help you import and work with not only one data source, but several simultaneously. Once the data is imported into PowerBI you can create relationships using simple drag and drop tools. For example, if you were trying to understand the relationship between Ice Cream sales and the weather, the table with Sales data (including the dates and times) could be ‘joined’ by a relationship to the weather table, which could hold data imported from your local weather bureau, for example. Mapping sales against weather becomes a trivial matter from there on. Let’s have a quick look at my simple one-table data and see if I can answer some of the above questions.
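To make the idea of a relationship between two tables a little more concrete, here is a rough Python sketch of the Ice Cream example. It is not how PowerBI works internally, just the same idea by hand: two tables share a date column, and each sales row is matched to the weather row for the same date. All names and figures are invented for illustration.

```python
from datetime import date

# Invented figures, purely for illustration.
sales = [
    {"date": date(2019, 6, 1), "ice_creams_sold": 120},
    {"date": date(2019, 6, 2), "ice_creams_sold": 45},
    {"date": date(2019, 6, 3), "ice_creams_sold": 150},
]
weather = [
    {"date": date(2019, 6, 1), "max_temp_c": 27.0},
    {"date": date(2019, 6, 2), "max_temp_c": 16.5},
]

# Index the weather table by the shared column, then look each sale up in it.
weather_by_date = {row["date"]: row for row in weather}

joined = [
    {**sale, "max_temp_c": weather_by_date[sale["date"]]["max_temp_c"]}
    for sale in sales
    if sale["date"] in weather_by_date  # skip days with no weather reading
]

for row in joined:
    print(row["date"].isoformat(), row["ice_creams_sold"], row["max_temp_c"])
```

The third sales row drops out because there is no weather reading for that date, which is exactly the kind of gap worth checking for before drawing conclusions from joined data.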

Applying Visualisations and Analysis

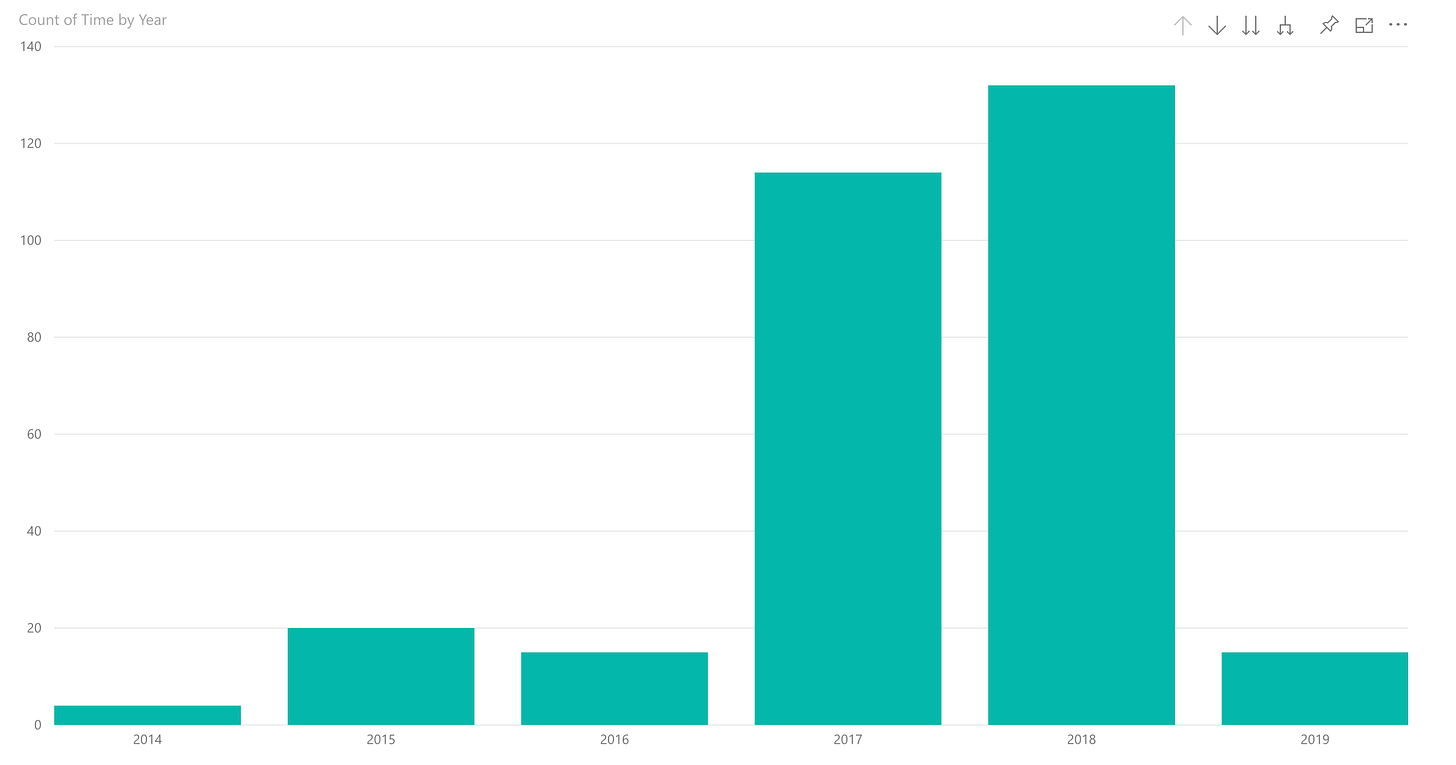

The first question I wanted to answer was the distribution of entries and if there was consistency in this. The following visualisation is pretty self-explanatory. Clearly, I started in earnest in 2017 and continued in 2018, with a big fall off in 2019. Yes, consistency for 2 years but a drop off recently, even when adjusting for the fact that 2019 is not yet half done. This one histogram answers questions 1 and 3 from the above MindMap (Is there consistency over the years? and Is there a noticeable pattern?).

Count of journal entries by year
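The per-year count, and the adjustment for a year that is not yet finished, can be sketched in a few lines of Python. The dates below are made up, and `as_of` is a hypothetical date for when the analysis is run. The adjustment simply scales the current year's count up to a full-year pace, which is one reasonable way to compare it with complete years, not the only one.

```python
from collections import Counter
from datetime import date

# Made-up entry dates for illustration; not my real journal data.
entry_dates = [
    date(2017, 3, 1), date(2017, 9, 14),
    date(2018, 1, 5), date(2018, 6, 20), date(2018, 11, 2),
    date(2019, 2, 10),
]
as_of = date(2019, 5, 31)  # hypothetical date on which the analysis is run

# Raw count of entries per calendar year.
per_year = Counter(d.year for d in entry_dates)

def annualised(year, count):
    """Scale the current, unfinished year up to a full-year pace."""
    if year != as_of.year:
        return float(count)
    days_elapsed = (as_of - date(year, 1, 1)).days + 1
    days_in_year = (date(year + 1, 1, 1) - date(year, 1, 1)).days
    return count * days_in_year / days_elapsed

for year in sorted(per_year):
    print(year, per_year[year], round(annualised(year, per_year[year]), 1))
```

If the annualised figure for the current year is still well below the earlier years, the drop-off is real and not just an effect of the calendar.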

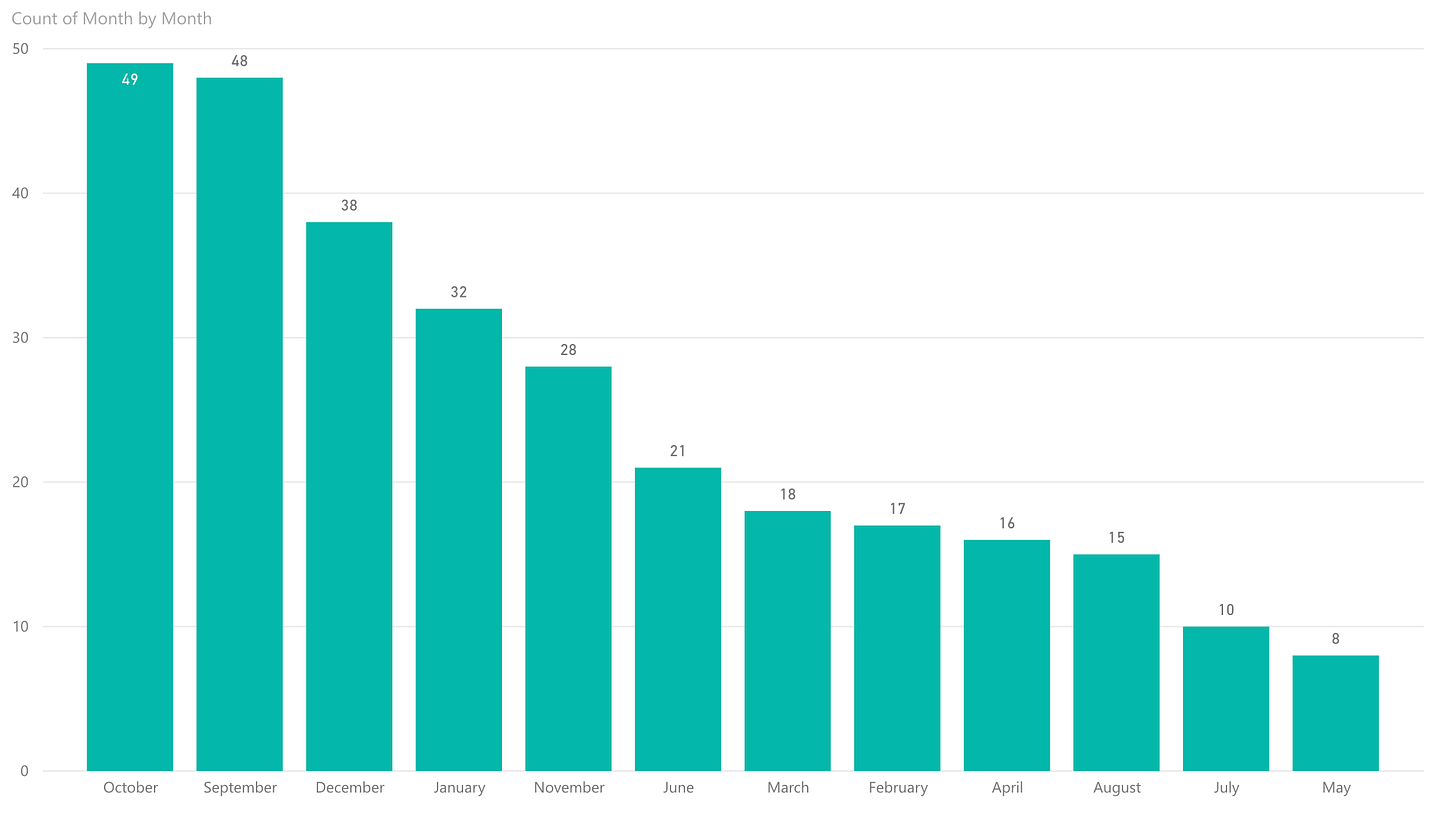

In answering question 2 (did I journal more in some months as opposed to others?), the following histograms were created:

Count of journal entries by month (all years)

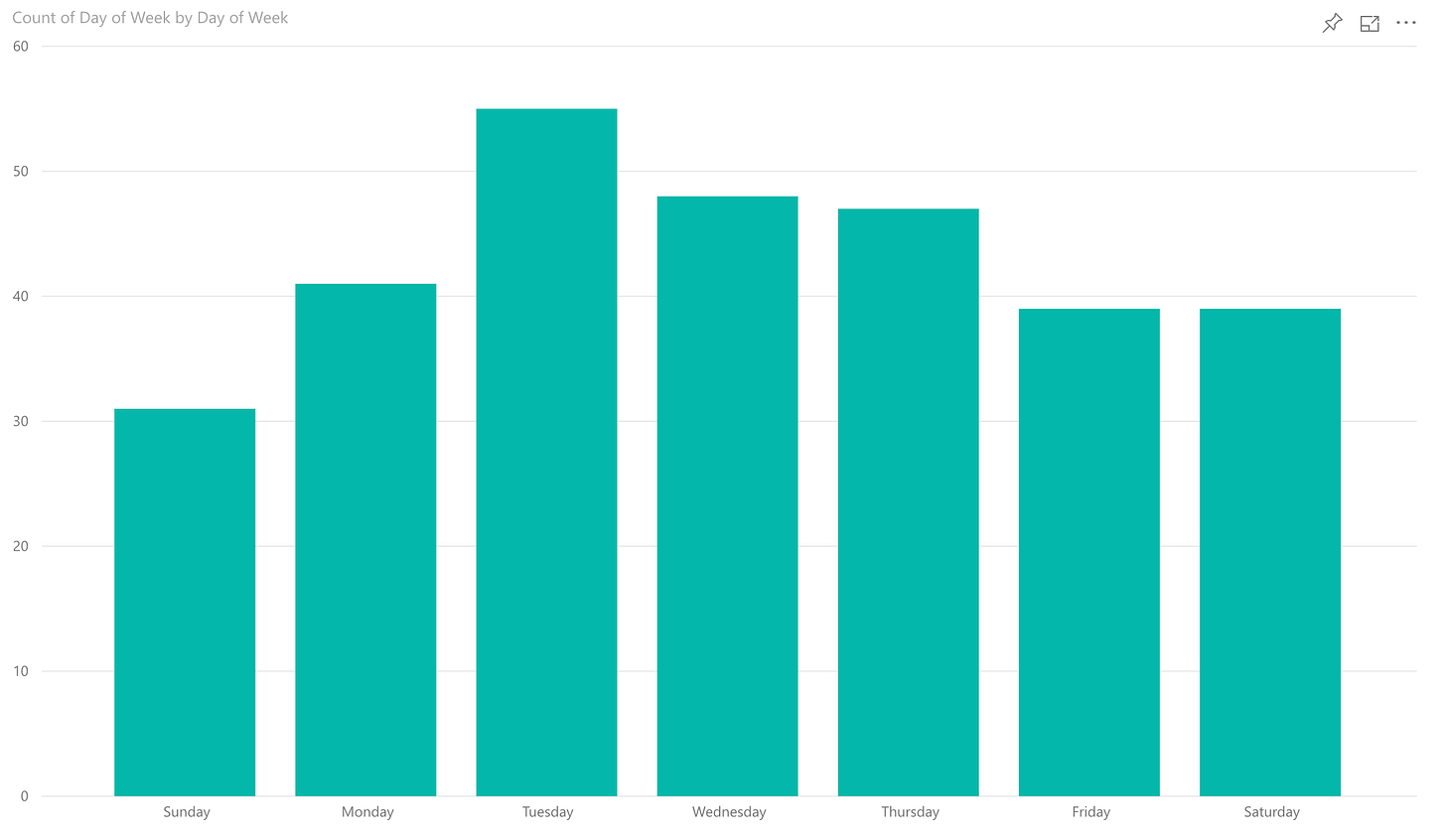

Count of journal entries by day (all years)

Again, pretty self-evident: I’ve been more consistent in some months than in others (October, September and December). There are other inferences I can draw too. For example, I’m not subject to a rush of New Year’s resolutions, deciding to do something in the New Year then quickly dropping out (gym membership offers play on this tendency). Tuesdays seem to be the day I journal most often, with Wednesday and Thursday coming close in frequency, while Monday, Friday, Saturday and Sunday are the days I miss more often. I peak in the middle of the week, if you will, thus half-answering question 4 (Was I more likely to journal certain days of the week?).
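The month and day-of-week histograms boil down to counting entries per month and per weekday. A small Python sketch, using invented timestamps instead of my real entries, might look like this:

```python
import calendar
from collections import Counter
from datetime import datetime

# Invented timestamps for illustration; not my real journal data.
timestamps = [
    datetime(2018, 10, 2, 6, 15),   # a Tuesday
    datetime(2018, 10, 3, 5, 5),    # a Wednesday
    datetime(2018, 10, 9, 6, 40),   # a Tuesday
    datetime(2018, 12, 6, 14, 30),  # a Thursday
]

# Count across all years: months are 1-12, weekdays are 0 (Monday) to 6 (Sunday).
by_month = Counter(ts.month for ts in timestamps)
by_weekday = Counter(ts.weekday() for ts in timestamps)

for month in range(1, 13):
    print(f"{calendar.month_name[month]:<10}{by_month[month]}")

for day in range(7):
    print(f"{calendar.day_name[day]:<10}{by_weekday[day]}")
```

Looping over every month and weekday, not only the ones present in the data, keeps the empty buckets visible, and the gaps are often the interesting part.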

The other half of the answer came from the following histogram:

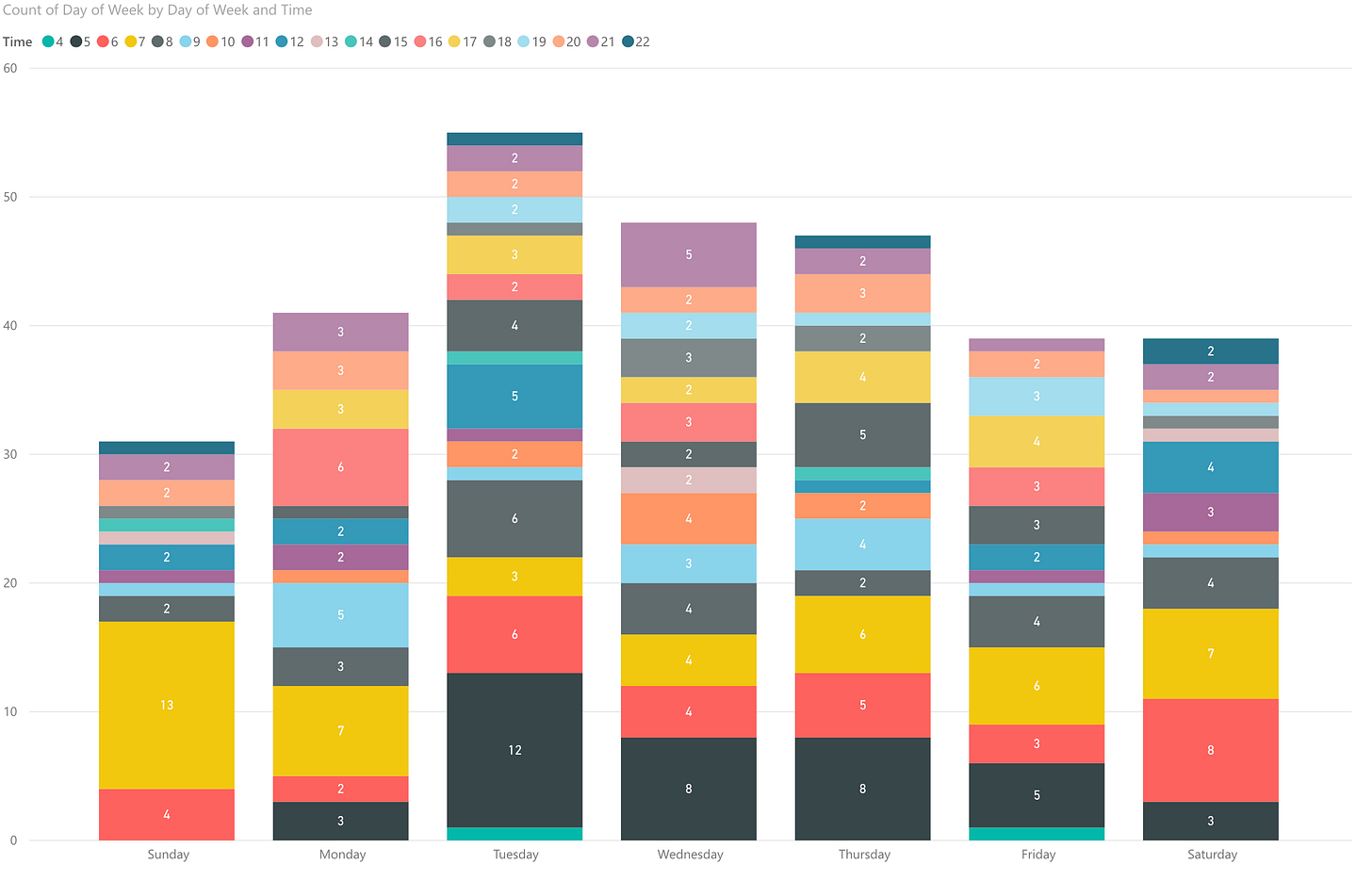

Split of journal entries by day of the week and the hours at which the entries were made

It’s a little difficult to read, as the data is a little spread out, but essentially we can conclude that I write most entries in the morning, between the hours of 5am and 8am. To better understand the chart, look at the key in the top left of the image, which shows the time of day in blocks of 1 hour. Yes, I have written in the journal around 4am! So, I’m a morning person. I already felt this was the case, but to have it all but confirmed by data is interesting. Depending on the type of task at hand, this kind of data can help you schedule when to attempt those tasks. For me, the morning is better for more reflective tasks, thinking and writing.
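The same split can be sketched in Python by counting entries per (day of week, hour) pair and then measuring what share falls in the morning window. The timestamps are invented, and I have taken the 5am to 8am window to mean hours 5, 6 and 7, which is an assumption on my part.

```python
from collections import Counter
from datetime import datetime

DAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
        "Friday", "Saturday", "Sunday"]

# Invented timestamps for illustration; not my real journal data.
timestamps = [
    datetime(2018, 10, 2, 6, 15),   # Tuesday morning
    datetime(2018, 10, 9, 6, 40),   # Tuesday morning
    datetime(2018, 10, 3, 5, 5),    # Wednesday morning
    datetime(2018, 12, 6, 14, 30),  # Thursday afternoon
]

# One bucket per (day of week, hour of day) pair.
by_day_and_hour = Counter((DAYS[ts.weekday()], ts.hour) for ts in timestamps)

# Share of entries written in the assumed morning window (hours 5, 6 and 7).
morning = sum(1 for ts in timestamps if 5 <= ts.hour < 8)
morning_share = morning / len(timestamps)

for (day, hour), count in sorted(by_day_and_hour.items(),
                                 key=lambda kv: (DAYS.index(kv[0][0]), kv[0][1])):
    print(f"{day:<10}{hour:02d}:00  {count}")
print(f"Morning share: {morning_share:.0%}")
```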

Just to cut the data again into another monthly analysis, the following histogram was created:

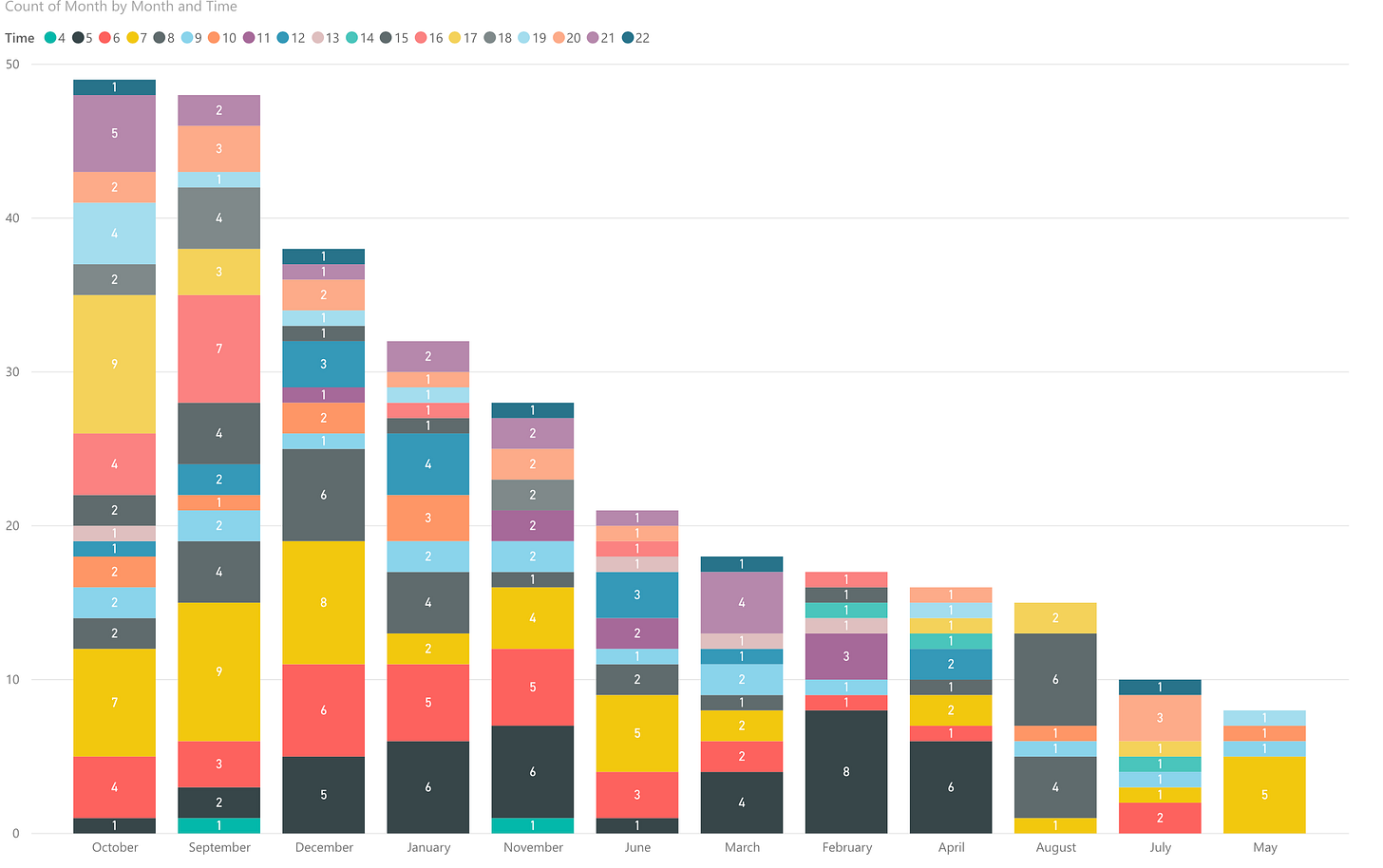

Split of journal entries by month and hours of the day of the entries

By month, the one stand-out thing that needs investigating is this: why do I have more afternoon entries in the months in which I made more entries overall? This admittedly simple and silly example does in fact show how simple data can help us make better analyses by revealing things that wouldn’t necessarily be seen when using standard tools like tables in Excel. But… Beware of causation and correlation (see Side Bar - Causation versus Correlation).

Conclusions

The big takeaway I wanted to impart is the possibility of revealing interesting and useful facts from even the simplest of data sets using modern tools. Digital Transformation calls for us to gather more data and this is one of the reasons why. This long issue only dips into the possibilities; in fact, questions 5 and 6 on the list would be the next logical steps to take. To do so, I would need to modify the table with columns for the number of entries and the word count of each entry. Measuring, for example, negative or positive type word counts could be used to gauge overall mood in the entries, with the obvious caveats. But you can see how a simple multi-column sheet can provide rich insights. As I mentioned before, joining other datasets to this model could provide even better analysis (Weather, GPS, etc.) … possibilities!
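As a taste of what those extra columns might look like, here is a deliberately naive Python sketch that computes a word count and positive/negative word tallies per entry. The two word lists are tiny and entirely hypothetical, and the entries are invented. A real mood analysis would need a proper lexicon and would still come with the caveats mentioned above.

```python
import re

# Tiny, hypothetical word lists; a real analysis would need a proper lexicon.
POSITIVE = {"happy", "great", "calm"}
NEGATIVE = {"tired", "stressed", "bad"}

def score_entry(text):
    """Return the word count and naive positive/negative word tallies."""
    words = re.findall(r"[a-z']+", text.lower())
    return {
        "word_count": len(words),
        "positive": sum(word in POSITIVE for word in words),
        "negative": sum(word in NEGATIVE for word in words),
    }

# Invented entries for illustration; not my real journal data.
entries = {
    "2018-10-02": "Great morning, felt calm and happy.",
    "2018-10-03": "Tired and stressed after a bad night.",
}

scores = {day: score_entry(text) for day, text in entries.items()}
for day, score in scores.items():
    print(day, score)
```

Counting words this way misses negation ("not happy" still counts as positive), which is one of the obvious caveats.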

Side Bar - Nudge Theory

Nudge Theory is, according to this Wikipedia article:

Nudge is a concept in behavioral science, political theory and behavioral economics which proposes positive reinforcement and indirect suggestions as ways to influence the behavior and decision making of groups or individuals. Nudging contrasts with other ways to achieve compliance, such as education, legislation or enforcement.

Nudge Theory has its roots in cybernetics and clinical psychotherapy before being more formally and scientifically described sometime after 1995.

Side Bar - Causation versus Correlation

When looking at data it is imperative that you understand the difference between causation and correlation. It cannot be assumed that one thing “caused” another because the two are correlated, even when the histogram appears to show it. Further analysis should be done to try to disprove the cause, and when you can’t, you have a little more reason to believe in it, though never 100%. Conflating the two is a classic error in data analysis and one to be avoided at all costs.

To better understand the difference, have a read of this article that picks apart, as one example, a very serious article in the Economist that suggested that Ice Cream consumption was related to IQ, i.e., the more frequently it is eaten, the higher the IQ… I’ll let you discover it for yourselves.

Reading List

Metadata is the biggest little problem plaguing the music industry

A great article from The Verge, on the other side of data usage and its potential for harm to copyright holders.

The Future is Digital Newsletter is intended for a single recipient, but I encourage you to forward it to people you feel may be interested in the subject matter. Thanks for being a supporter, have a great day.