Issue 15 : Part 4 - Practical steps towards your Digital Transformation journey

Data, data, data

Good day everyone. As promised, back to a practical issue, this time less generalist and more centred on purely digital initiatives. Hope you enjoy it, let me know, don’t be shy.

On to this issue.

Data is the new oil

If nothing else, Digital Transformation is inseparable from data: not just any data, but good data that can be exploited in the short term as well as the long term.

Data is the new oil in the digital economy

Although some dispute how well the phrase holds up, it rings true for many businesses looking towards digital transformation. Businesses produce data all the time, but it is mostly lost, stored but never accessed, or downright under-exploited. We are data-rich but analysis-poor, and it’s to our detriment. At the other extreme, data is not God, so some restraint and sensible treatment are necessary. I’m getting ahead of myself, so let us look at data and how we can first identify the data to capture and then develop data capture strategies.

Where is the data coming from?

Before digital systems were the norm, data capture was organised and designed in a systematic manner, with businesses thinking specifically about what they wanted to retain. The tools needed to measure data were an onerous investment and notoriously unreliable.

The first Building Management Systems (BMS), developed by the likes of Sauter and Johnson Controls amongst others, were simple systems based on Programmable Logic Controllers, or PLCs. A far-off thermostat would send a signal (often infrequently) to the central unit, which would apply simple logic and then trigger another basic module to take the remedial action. A high temperature in the main meeting room due to human activity would trip the thermostat, which would send a signal to the central unit, which would in turn consult the PLC code and start the fans to send cool air to the room (I’ve highly simplified it here, but you get the picture):

IF HIGH TEMP THEN OPEN COLDWATER VALVE AND START COOLING FANS
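For readers who prefer to see it in a modern language, here is a minimal Python sketch of the same rule. It is an illustration only, not how any real BMS or PLC is programmed: the threshold value, the `Actuators` class and the `apply_cooling_rule` function are all names I have made up for the example.

```python
from dataclasses import dataclass

# Hypothetical setpoint; a real BMS would read this from its configuration.
HIGH_TEMP_THRESHOLD_C = 24.0


@dataclass
class Actuators:
    """State of the two outputs named in the rule above."""
    cold_water_valve_open: bool = False
    cooling_fans_running: bool = False


def apply_cooling_rule(room_temp_c: float, actuators: Actuators) -> Actuators:
    """IF HIGH TEMP THEN OPEN COLDWATER VALVE AND START COOLING FANS."""
    if room_temp_c > HIGH_TEMP_THRESHOLD_C:
        actuators.cold_water_valve_open = True
        actuators.cooling_fans_running = True
    return actuators


# A warm meeting room trips the rule; a cool one would leave everything off.
meeting_room = apply_cooling_rule(27.5, Actuators())
print(meeting_room)
```

Notice that the only data involved is a single temperature reading, used once and then discarded, which is exactly the point of the comparison with today.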

In more operational roles, data would be extracted from systems such as payroll, and in some cases specific market surveys were published to generate data, but nothing like the amount of data we produce in the modern world. In fact, data is not only being produced in vastly larger quantities, it is being generated automatically whether we want it or not.

With the applications, devices and sensors that have been deployed since the early 2000s, there is a near-constant avalanche of data being produced on anything from the length of time you slept to the detailed nuances of how people move through a particular corridor of a shopping mall. Social media additionally generates tons and tons of information about us and our surroundings. The whole supply chain, from development to the final death of a product, is generating data all the time.

The indications are that the rate of data production is not slowing but accelerating. What was benign data may be turned into extremely valuable data in a short period of time. The Facebooks and Googles of the world know this; they are making it easier to capture and analyse data internally and making many of their tools available to the general public.

The difference between then and now

The data projects of the past were designed and implemented to strict rules and guidelines, and the data produced was subsequently structured and used to fulfil basic objectives. To give you an idea of exactly what structured data is, let’s first look at its definition according to techopedia.com:

Data conform to fixed fields. That means those utilizing data can anticipate having fixed-length pieces of information and consistent models in order to process that information.

We can see that structured data has specific attributes that let us or our computers know beforehand how that data will appear, allowing us to apply processing easily. Examples of structured data are elements such as the information on your passport: name, age, place of birth, expiry date and other simple, fixed data types.
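To make “knowing beforehand how the data will appear” concrete, here is a small Python sketch of a passport-style record. The `PassportRecord` class and the sample values are invented for illustration, and I have assumed a date of birth is stored rather than an age, since an age can be derived from it.

```python
from dataclasses import dataclass, fields
from datetime import date


@dataclass(frozen=True)
class PassportRecord:
    """Every record has exactly these fields, with these types."""
    name: str
    date_of_birth: date
    place_of_birth: str
    expiry_date: date


record = PassportRecord(
    name="Jane Example",  # made-up sample data
    date_of_birth=date(1985, 3, 14),
    place_of_birth="Lyon",
    expiry_date=date(2030, 6, 30),
)

# Because the structure is fixed, the field names are known
# without having to inspect any individual record.
column_names = [f.name for f in fields(PassportRecord)]
print(column_names)
```

Any program receiving such records can be written in advance, because nothing about their shape will come as a surprise.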

Much modern data, on the other hand, is unstructured, which techopedia.com defines as:

Unstructured data represents any data that does not have a recognizable structure. It is unorganized and raw and can be non-textual or textual. Unstructured data also may be identified as loosely structured data, wherein the data sources include a structure, but not all data in a data set follow the same structure.

Examples of unstructured data include the data generated by corporate Customer Relationship Management (CRM) systems or the previously mentioned social media applications like LinkedIn, Twitter and Facebook. Although the data generated is classified (name, amount spent, next call-back date, etc.), it is loosely tagged rather than forced into specific rows and columns in a spreadsheet. In fact, many modern systems deliberately use unstructured data to push the boundaries of what can be extrapolated from these datasets, sometimes producing surprising results.
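As a rough illustration of loosely structured data, the Python sketch below holds three CRM-style records that do not all carry the same fields. The company names, field names and values are invented; the point is only that the processing has to cope with whatever happens to be present.

```python
# Three loosely tagged records; no two have exactly the same fields.
records = [
    {"name": "Acme Ltd", "amount_spent": 1200.0, "next_call_back": "2019-07-01"},
    {"name": "Globex", "tags": ["prospect", "met at trade show"]},
    {"name": "Initech", "amount_spent": 300.0, "notes": "Prefers email"},
]

# There is no fixed schema, so collect whichever fields actually appear.
all_fields = sorted({key for record in records for key in record})

# Processing has to tolerate missing fields rather than assume them.
total_spent = sum(record.get("amount_spent", 0.0) for record in records)

print(all_fields)
print(total_spent)
```

Compare this with a spreadsheet, where every row would be forced to have the same columns whether or not they apply.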

Data capture strategies

Let’s first look at some of the places you can find data relevant to your business. There are many sources; clearly I can’t list them all, and quite frankly I don’t know all of them either. But here are some basic examples to help you get started on your own data research.

Google Trends

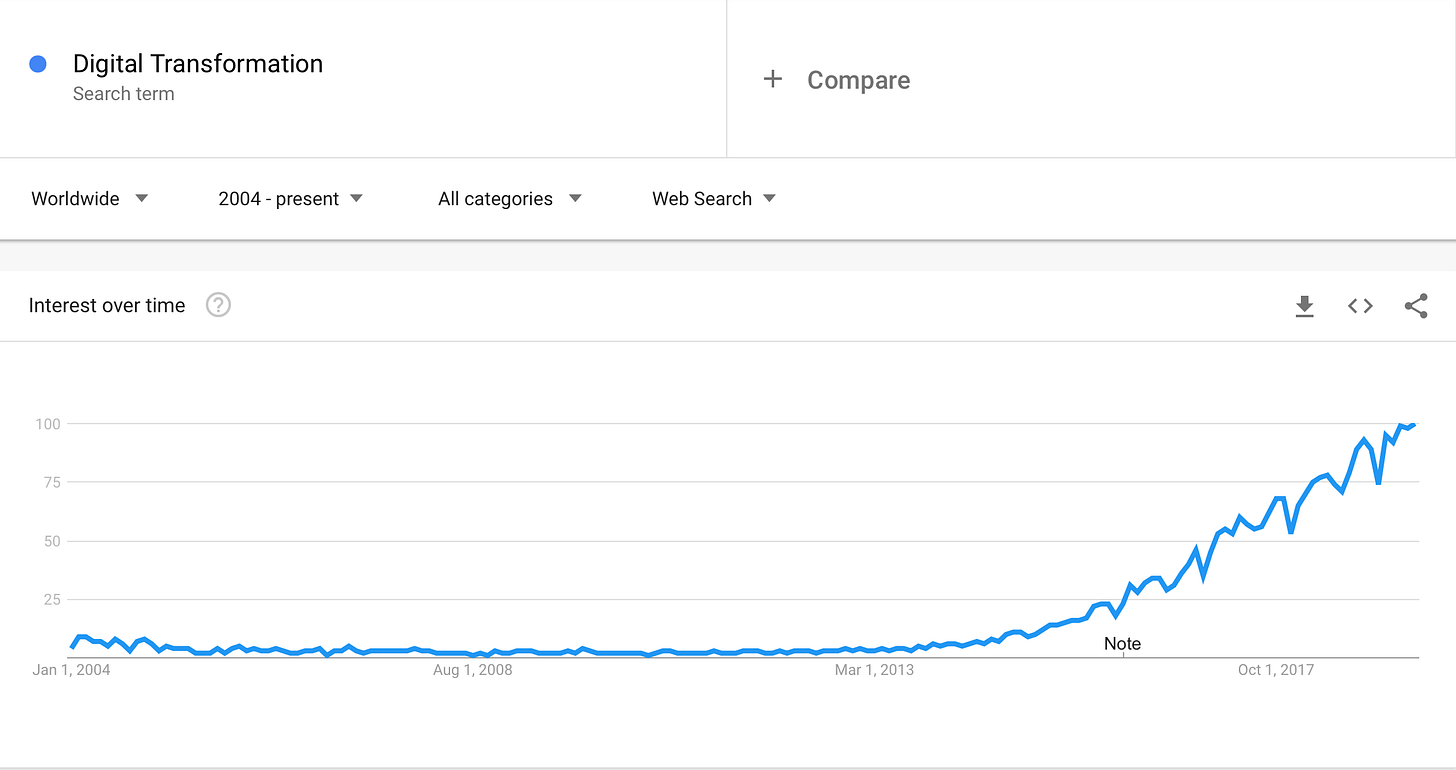

Google Trends is probably one of the best known. It’s a free service open to all comers, and you don’t even need a Google account to benefit from its information. Simple to use, the interface is about as Google as you can get. Type a word or two and Google Trends will tell you how they have trended in Internet searches over the last several years. Google Trends ranks its data on a scale from 0 to 100, with 100 being peak interest in the subject and 0 meaning either no interest or that no data was available. In the following example, I searched for Digital Transformation and set the time span to “2004 - present”. This was the result:

Source : Google Trends

Digital Transformation is currently at peak interest, which is logical, but it didn’t start attracting interest until around 2014. That in and of itself is an interesting data point: Digital Transformation is a fairly new phenomenon. Additionally, you can pit search term against search term to gain insight into their comparative interest, which is useful for judging interest in one type of product versus another. Since this is not a lesson in how to use Google Trends, I won’t go into too much detail, but you should know that the data can be displayed by region and that related queries are suggested automatically, again giving you an idea of the things people are searching for.
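If the 0 to 100 scale seems abstract, the Python sketch below shows the general idea of rescaling a series so that its peak becomes 100. It is a simplification and not Google’s actual method, which is based on a term’s share of all searches; the `scale_to_peak` function and the yearly figures are invented for the example.

```python
def scale_to_peak(values: list[float]) -> list[int]:
    """Rescale values so the largest becomes 100 and the rest are relative to it."""
    peak = max(values, default=0)
    if peak <= 0:
        # No interest at all (or no data): everything scores 0.
        return [0 for _ in values]
    return [round(100 * value / peak) for value in values]


# Invented raw interest figures per year, for illustration only.
yearly_interest = {2012: 40, 2014: 120, 2016: 450, 2018: 900}

scaled = dict(zip(yearly_interest, scale_to_peak(list(yearly_interest.values()))))
print(scaled)  # {2012: 4, 2014: 13, 2016: 50, 2018: 100}
```

This is why a score of 50 does not mean “half of all searches”, only half of the peak for that term over the period and region you selected.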

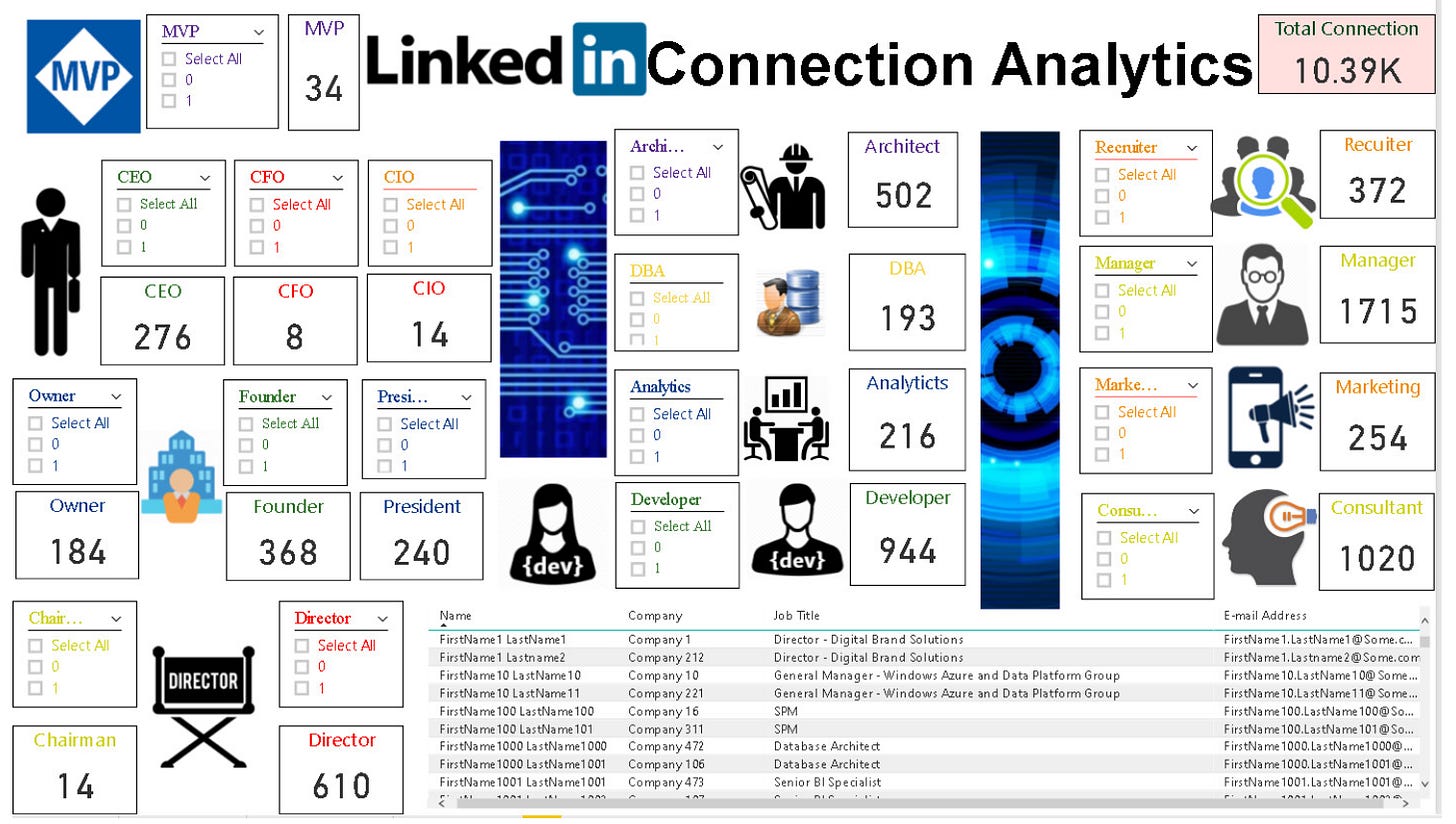

As a professional network with over 500 million users, LinkedIn holds an enormous amount of data on companies and employees. Whilst there is no simple, direct interface aside from LinkedIn itself, tools are available to extract data directly from LinkedIn for manipulation in other systems. One such example is the LinkedIn Connection Analytics Dashboard from Vishal Pawar, Chief Architect at Aptude. This uses a PowerBI template to analyse the data exported from LinkedIn. There’s plenty more information at the link.

Source : https://www.linkedin.com/pulse/brand-new-perform-free-linkedin-connection-analytics-vishal-pawar/

Don’t forget good old data exports from legacy applications. These data can be useful when integrated into an analytics application like PowerBI or Google Analytics. Even some basic statistical analysis in tools like Microsoft Excel can provide useful information.
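As a hedged example of how little is needed to get started, the Python sketch below summarises a small CSV export using only the standard library. The column names and figures are invented, and the export is embedded as text so the example runs on its own; in practice you would open the file your legacy application produced.

```python
import csv
import io
import statistics

# Stand-in for a file exported from a legacy application (invented data).
EXPORT = """invoice_id,customer,amount
1001,Acme Ltd,250.00
1002,Globex,1200.50
1003,Acme Ltd,310.00
1004,Initech,95.25
"""

rows = list(csv.DictReader(io.StringIO(EXPORT)))
amounts = [float(row["amount"]) for row in rows]

# The same basic figures you might calculate in a spreadsheet.
summary = {
    "invoices": len(amounts),
    "total": round(sum(amounts), 2),
    "mean": round(statistics.mean(amounts), 2),
    "median": round(statistics.median(amounts), 2),
}
print(summary)
```

Here the mean is well above the median, which already hints that one large invoice is skewing the picture.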

Cloud Applications or Software as a Service (SaaS)

The value of cloud applications goes beyond the initial promises of yesterday. Most SaaS applications like Office 365 and Google Apps were sold on the promise that they entailed no upfront costs and offloaded day-to-day operations management, freeing the client to concentrate on the real value-added aspects of the business. Whilst this is somewhat true, it is incredibly short-sighted. The ‘real’ value of cloud-based apps is the data they generate, which can be collected, integrated, joined and exploited by all sorts of systems, providing value that is greater than the sum of its parts.

Take, for example, a small business that has fairly standard needs for operations software: accounting, CRM and project planning. In the past, the business would purchase a dedicated accounting application like Sage and a dedicated CRM, Salesforce for example, and would probably use Microsoft Project. These applications created silos that largely prevented information from being shared between the systems. In fact, a very well remunerated job in the past was that of a data integrator, who had the skills to “join” systems in a very basic manner to try to extract value from multiple systems rather than individual ones.

Today, my advice for a small business would be to go completely SaaS but to choose systems that offer data integration APIs or connections. Modern SaaS applications often allow links to platforms such as Office 365, to CRM software like HubSpot and mailing list management software (did anyone say MailChimp?), right through to accounting and billing. A client created in the CRM as a prospect should automatically appear in the project management system and be created as a client in the accounting software, regardless of whether it has purchased anything or not. Understanding the when, why, how and how much across the different systems has enormous potential to generate value.
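The prospect example can be sketched in a few lines of Python. This is a toy model, not any vendor’s API: the `System` class, its `upsert_client` method and the `create_prospect` function are hypothetical names, and each “application” is just a dictionary in memory.

```python
from dataclasses import dataclass, field


@dataclass
class System:
    """A stand-in for one SaaS application holding client records."""
    name: str
    clients: dict[str, dict] = field(default_factory=dict)

    def upsert_client(self, client_id: str, details: dict) -> None:
        # Insert the client, or update the existing record with the same ID.
        self.clients.setdefault(client_id, {}).update(details)


def create_prospect(client_id: str, details: dict,
                    crm: System, downstream: list[System]) -> None:
    """Create the prospect in the CRM, then copy it to every linked system."""
    crm.upsert_client(client_id, {**details, "status": "prospect"})
    for system in downstream:
        system.upsert_client(client_id, details)


crm = System("CRM")
projects = System("Project management")
accounting = System("Accounting")

create_prospect("C-001", {"company": "Acme Ltd"}, crm, [projects, accounting])
print(accounting.clients)  # {'C-001': {'company': 'Acme Ltd'}}
```

The shared client ID is what matters: once every system agrees on it, questions that span systems become possible to answer.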

Linking these data sources usually happens through one of two paths. The first is directly through the application’s interface, where data connections are exposed directly, i.e., from one application you log in to the other application and grant access to its data. The second path is a little more long-winded, but often achieves the same thing and can provide even more capabilities. There are three well-known systems that fit this description. Let’s have a quick look at each one.

Microsoft Flow

For a business with a significant investment in Microsoft, and particularly Office 365 and its components, the obvious choice is Microsoft’s own workflow management tool, Flow. It allows you to turn multi-step repetitive processes into true workflows rich in data. Not limited to Microsoft applications, Flow allows links to other SaaS platforms like MailChimp, Facebook, Google and Slack. Microsoft Flow is free to use, with options for Premium connections and workflows.

IFTTT

If This Then That is considered the granddaddy of the initial wave of workflow applications and is still one of the most popular. Its real value is in the consumer SaaS space where, like Flow, it allows hundreds of different platforms to be linked together, from controlling lights to sending emails when there’s a tweet with a particular keyword. IFTTT is free to use.
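The name says it all, and the idea fits in a short Python sketch. This is not how IFTTT is built or configured: `keyword_trigger`, `email_action` and `run_applet` are names I have invented, and the “email” is simply appended to a list rather than sent.

```python
from typing import Callable

outbox: list[str] = []  # stands in for an email service


def keyword_trigger(keyword: str) -> Callable[[str], bool]:
    """'This': fires when a post mentions the keyword (case-insensitive)."""
    return lambda post: keyword.lower() in post.lower()


def email_action(post: str) -> None:
    """'That': record an email instead of really sending one."""
    outbox.append(f"New mention: {post}")


def run_applet(trigger: Callable[[str], bool],
               action: Callable[[str], None],
               posts: list[str]) -> None:
    """Run the action on every post for which the trigger fires."""
    for post in posts:
        if trigger(post):
            action(post)


run_applet(
    keyword_trigger("digital transformation"),
    email_action,
    ["Lunch was great", "Our Digital Transformation kickoff is today"],
)
print(outbox)  # ['New mention: Our Digital Transformation kickoff is today']
```

Keeping the trigger and the action separate is what lets these tools mix and match hundreds of platforms.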

Zapier

Zapier is probably the most sophisticated and consequently the most complicated to use of the three, but don’t let that discourage you. It’s the most accomplished and reliable workflow application I’ve used. I personally use it to link data between my CRM, time-tracking system, accounting software and various social networks.

As you can see, data is all around us and reasonably easily exploitable with a little help from tools like those I’ve highlighted here. If you want some help with your own needs, get in touch; I’d be happy to help.

In the next part I’ll look at more data collection and introduce notions of simple data usage with powerful analytical tools. I look forward to publishing the next issue.

Recommended Reading

Measure What Matters, whilst not strictly about data collection and analysis, is a good book to get you thinking about the right type of data collection strategies with achievable outcomes (Key Results). It has a foreword by Larry Page, one of the co-founders of Google, who starts off by saying:

I wish I had this book nineteen years ago, when we founded Google.

Reading List

Will Artificial Intelligence Enhance or Hack Humanity? - Wired

A really interesting interview with Yuval Noah Harari and Fei-Fei Li by Nicholas Thompson of Wired.

The Future is Digital Newsletter is intended for a single recipient, but I encourage you to forward it to people you feel may be interested. The more the merrier.

Thanks for being a supporter, have a great day.